#attention

Next Steps for Deep Learning

src: Quora

Deep Learning 1.0

Limitations:

- training time/labeled data: a common refrain about DL/RL is that it takes an enormous amount of data. Contrast this with the [[bitter-lesson]], which claims that we should be utilizing computational power.

- adversarial attacks/fragility/robustness: due to the nature of DL (functional estimation), there’s always going to be fault-lines.

- selection bias in training data

- rare events: models are not able to generalize out of distribution

- i.i.d. assumption on the data-generating process (DGP)

- temporal changes/feedback mechanisms/causal structure?

Deep Learning 2.0

Self-supervised learning—learning by predicting input

- Supervised learning : this is like when a kid points to a tree and says “dog!”, and you’re like, “no”.

- Unsupervised learning : when there isn’t an answer, and you’re just trying to understand the data better.

- classically: clustering/pattern recognition.

- auto-encoders: pass through a bottleneck to reconstruct the input (the main question is how to develop the architecture)

- i.e. learning efficient representations

- Self-supervised learning : no longer just about reconstructing the input, but self-learning

- e.g. shuffle-and-learn: shuffle video frames to figure out if it’s in the correct temporal order

- more about choosing clever objectives to optimize (to learn something intrinsic about the data)
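A minimal sketch of how a shuffle-and-learn style objective turns unlabeled video into a labeled problem. The function name `make_order_examples`, the window size, and the string stand-ins for frames are all made up for illustration; the actual method samples frames more carefully than this.

```python
import random

def make_order_examples(frames, window=3, seed=0):
    """Build (frame_tuple, label) pairs; label 1 = correct temporal order, 0 = shuffled.

    Assumes window >= 2 and that the frames in a window are distinct,
    otherwise no "wrong" ordering exists.
    """
    rng = random.Random(seed)
    examples = []
    for start in range(len(frames) - window + 1):
        clip = list(frames[start:start + window])
        examples.append((tuple(clip), 1))        # positive: original order
        shuffled = clip[:]
        while shuffled == clip:                  # re-shuffle until the order really changes
            rng.shuffle(shuffled)
        examples.append((tuple(shuffled), 0))    # negative: same frames, wrong order
    return examples

frames = ["f0", "f1", "f2", "f3", "f4"]  # stand-ins for video frames
examples = make_order_examples(frames)
```

The labels come for free from the data itself, which is the point: a classifier trained on `examples` has to pick up something about temporal structure to do well.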

The brain has about 10^14 synapses and we only live for about 10^9 seconds. So we have a lot more parameters than data. This motivates the idea that we must do a lot of unsupervised learning since the perceptual input (including proprioception) is the only place we can get 10^5 dimensions of constraint per second.

Hinton via /r/MachineLearning. [1]

[1] I’m not sure I follow the logic here. My guess is that you have a dichotomy between straight up perceptual input and actually learning by interacting with the world. But that latter feedback mechanism also contains the perceptual input, so it should be at the right scale.

- much more data than supervised or #reinforcement_learning

- if coded properly (like the shuffle-and-learn paradigm), then it can learn temporal/causal notions

- utilization of the agent/learner to direct things, via #attention, for instance.

Figure 1: Babies learn about gravity at around 9 months. Before: an experiment in which a car is pushed off and left suspended in mid-air (by a string) doesn’t surprise them. After: it does.

- case study: BERT. self-supervised learning by predicting missing (masked) words in a sentence (reconstruction); predicting the next word is the unidirectional variant of the same idea.

- not multi-sensory/no explicit agents. this prevents it from picking up physical notions.

- sure, we need multi-sensory for general-purpose intelligence, but it’s still surprising how far you can go with just statistics

- less successful in image/video (i.e. something special about words/language). [2]

[2] Could it be that words are already highly representative objects, classifications of a sort, whereas everything else sits at the bit/data level, which we know is not really how we process such things? This suggests that we should find an equivalent type of language/vocabulary for images.

- we operate at the pixel-level, which is suboptimal

- prediction/learning in (raw) input space vs representation space

- high-level learning should beat out raw-inputs [3]

[3] My feeling though is that you eventually have to go down to the raw-input space, because that is where the comparisons are made/the training is done. In other words, you can transform/encode everything into some better representation, but you’ll need to decode it at some point.
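Going back to the BERT case study: a rough sketch of the "hide some words, predict them back" setup. This only builds the corrupted input and the prediction targets. The helper `mask_tokens` is hypothetical, it splits on whitespace rather than using a real tokenizer, and it skips the extra corruption rules BERT applies on top of plain masking. The 15% masking rate is the one BERT uses.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, mask_fraction=0.15, seed=0):
    """Replace a random fraction of tokens with MASK; return (corrupted, targets)."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_fraction * len(tokens)))   # always hide at least one token
    positions = set(rng.sample(range(len(tokens)), n_mask))
    corrupted = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    targets = {i: tokens[i] for i in positions}            # what the model must reconstruct
    return corrupted, targets

tokens = "the cat sat on the mat".split()
corrupted, targets = mask_tokens(tokens)
```

The training signal is then just "predict `targets[i]` at each masked position `i` given `corrupted`", with context available on both sides of the gap.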

Leverage power of compositionality in distributed representations

- combinatorial explosion should be exploited

- composition: basis for the intuition as to why depth in neural networks is better than width

Moving away from stationarity

- stationarity: train/test distribution is the same distribution

- they talk about IID, which is not quite the same thing (IID is on the individual sample level—the moment you have correlations then you lose IID)

- this goes beyond the #econometrics notion of non-stationarity, in which the underlying distributions are time-varying

- feedback mechanisms (via agents/society) require dropping IID (and relate to [[project-fairness]])

- example: classifying cows vs camels reduces to classifying desert vs grass (yellow vs green)

- not sure how this example relates, probably need to read [[invariant-risk-minimization]]
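One possible reading of the cow/camel example, as a toy simulation (my interpretation, not something from the source). A learner that just picks whatever feature predicts best on the training set grabs the background colour, and falls apart once the background/animal correlation flips at test time. All the numbers (80% reliable "hump" cue, 95% vs 5% background match) and names are made up for illustration.

```python
import random

def make_animals(n, p_match, seed):
    """Rows of (has_hump, yellow_background, label); label 1 = camel, 0 = cow.

    has_hump agrees with the label 80% of the time (noisy but stable cue).
    yellow_background agrees with the label with probability p_match.
    """
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        hump = label if rng.random() < 0.8 else 1 - label
        yellow = label if rng.random() < p_match else 1 - label
        data.append((hump, yellow, label))
    return data

def accuracy(data, feature):
    """Accuracy of predicting the label directly from one binary feature."""
    return sum(row[feature] == row[2] for row in data) / len(data)

train = make_animals(2000, p_match=0.95, seed=0)  # camels almost always on sand
test = make_animals(2000, p_match=0.05, seed=1)   # correlation flipped

# "Training": choose the single feature with the best training accuracy.
best = max((0, 1), key=lambda f: accuracy(train, f))
train_acc = accuracy(train, best)
test_acc = accuracy(test, best)
hump_test_acc = accuracy(test, 0)  # the stable cue still works at test time
```

Here the train and test sets are each i.i.d. samples, but from different distributions, so minimizing training error rewards the shortcut (index 1, background) over the cue that actually transfers (index 0, hump).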

Causality

- causal distributed representations (causal graphs)

- allows for causal reasoning

- it’s a little like reasoning in the representation space?

Lifelong Learning

- learning-to-learn

- cumulative learning

Transformers

- references:

- lil-log

- distill

- ebook-chapter on transformers (actually this ebook isn’t great, as it ends up being more about the implementation)

- it turns out that #attention is an earlier concept, which itself is motivated by the encoder-decoder sequence-to-sequence architecture

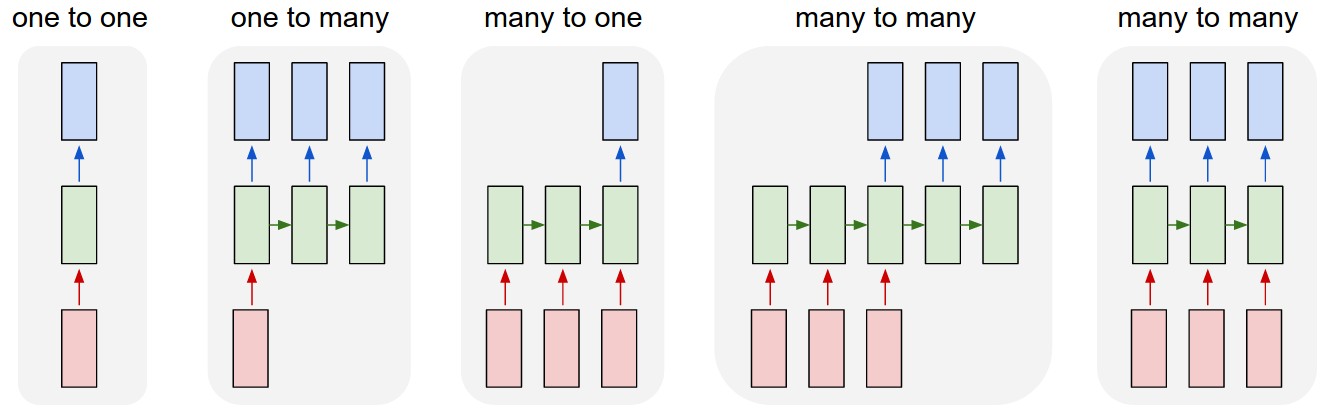

- (oops) I didn’t really understand this diagram:

- sequence to sequence models are essentially the third diagram, where basically the input sequence and output sequence are asynchronous

- versus the normal rnn, which takes the output as the input

- I really like the example of 1-to-many, image-to-caption, so your input is not a sequence, but your output is

- seq2seq example is the translation program, where importantly the input and output sequence don’t have to be the same length (due to the way languages differ in their realizations of the same meaning)

- the encoder is the rnn on the input sequence, and this culminates in the final hidden state

- this is essentially the context vector: the idea here is that this (fixed-length) vector captures all the information about the sentence

- key: this acts like a sort of informational bottleneck, and actually is the impetus for the attention mechanism

- key point (via this tutorial):

- instead of having everything represented as the final hidden state (fixed-length), why not just look at all the hidden states (vectors representing each word)

- but, that would be variable length, so instead just look at a linear combination of those hidden states. the weights of this linear combination are learned, and this is basically attention

- Transformers #todo

- “prior art”

- CNN: easy to parallelize, but aren’t recurrent (can’t capture sequential dependency)

- RNN: the reverse (captures sequential dependency, but hard to parallelize)

- goal of transformers/attention is to achieve parallelization and recurrence

- by appealing to “attention” to get the recurrence (?)
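A small sketch of the "key point" above: rather than keeping only the final hidden state, score every encoder hidden state against the current decoder state and take the weighted sum. I'm using a plain dot product as the score here for simplicity; in the seq2seq attention papers the scoring function is itself a small learned network. The vectors are toy values and the names are made up.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_context(query, hidden_states):
    """Return (weights, context): context is the weighted sum of hidden states."""
    scores = [sum(q * h for q, h in zip(query, state)) for state in hidden_states]
    weights = softmax(scores)
    dim = len(hidden_states[0])
    context = [sum(w * state[d] for w, state in zip(weights, hidden_states))
               for d in range(dim)]
    return weights, context

# Toy encoder hidden states, one per input word (values are invented).
hidden_states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
query = [2.0, 0.0]  # stand-in for the current decoder state
weights, context = attention_context(query, hidden_states)
```

However many input words there are, `context` has the same length as a single hidden state, so the variable-length problem goes away, and a fresh context is computed for every output step instead of one bottleneck vector for the whole sentence.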

Transformers

- key is multi-head self-attention

- encoded representation of input: key-value pairs (\(K,V \in \mathbb{R}^{n}\))

- corresponding to hidden states

- previous output is compressed into query \(Q \in \mathbb{R}^{m}\).

- output of the transformer is a weighted sum of the values (\(V\)).
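For reference, the weighted sum is usually written in the scaled dot-product form below. This stacks the queries, keys and values as matrices (one row per position), which goes a bit beyond the notation in these notes; \(d_k\) is the dimension of each key vector, a symbol not defined above.

\[
\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V
\]

The softmax is taken row-wise, so each row gives the weights one query puts on the values. Multi-head attention runs several of these in parallel on different learned linear projections of \(Q\), \(K\), \(V\) and concatenates the results.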

Todo

- [[toread]]: Visualizing and Measuring the Geometry of BERT arXiv

- random blog

- pretty intuitive description of transformers on tumblr, via the LessWrong community

Backlinks

- [[gpt3]]

- This is a 175b-parameter language model (117x larger than GPT-2). It doesn’t use SOTA architecture (the architecture is from 2018: 96 transformer layers with 96 attention heads; see [[transformers]]) [1], is trained on a few different text corpora, including CommonCrawl (i.e. random internet html text dump), wikipedia, and books [2], and follows the principle of language modelling (unidirectional prediction of next text token) [3]. And yet, it’s able to be surprisingly general purpose and performs surprisingly well on a wide range of tasks (examples curated below).

[1] The self-attention somehow encodes the relative position of the words (using some trigonometric functions?).

[2] The way they determine the pertinence/weight of a source is by upvotes on Reddit. Not sure how I feel about that.

[3] So, not bi-directional as is the case for the likes of #BERT. A nice way to think of BERT is as a denoising auto-encoder: you feed it sentences but hide some fraction of the words, and BERT has to guess those words.

Episode with Ilya

source: AI Podcast

- the recent breakthrough for NLP has been #transformers. it turns out that the key contribution is not just the notion of #attention, but that it’s removed the sequential aspect of RNN, which allows for much faster training (and for some reason this allows for more efficient GPU training).

- which, at a higher level, simply means that if you can train larger versions of these deep learning models, they will oftentimes just do better

- he makes a claim about how, when training a #language_model with an LSTM, if you increase the size of the hidden layer, then eventually you might get a hidden node that is a sentiment node. in other words, in the beginning, the model is capturing more lower-level features of the data. but as you increase the capacity of the model, it’s able to capture more high level concepts, and it does so naturally, just given the model capacity.

- which essentially argues for just having larger and larger models, as you’ll just get more emergent behaviour