#machine_learning

Explainable Trees

To Read:

Dataset Bias

src: Tommasi et al. (2015): Tommasi, Tatiana, Novi Patricia, Barbara Caputo, and Tinne Tuytelaars. 2015. “A Deeper Look at Dataset Bias.” arXiv.org, May. http://arxiv.org/abs/1505.01257v1

Machine learning fundamentally operates by finding patterns in datasets.[1] As such, the particulars of the dataset that you train on will affect what possible models can be learned.

[1] I always knew that datasets were biased (especially given the whole fairness problem), and that this leads to various problems, but I didn’t realize this was a whole field of study. Fascinating.

Focusing on visual data for the moment, it is clear that, even in the era of big data, most datasets cannot possibly capture every facet of visual information,[2] and so someone has to contend with the blind spots or biases that result from the particular curation of data.

[2] This ties into the problem of self-driving cars: your dataset can’t possibly contain every single circumstance, and so it is the situations way off in the tail that cause the most headache, much like what people like Taleb always talk about.

Causes:

- capture bias: things like POV, lighting conditions, and the actual device used;

- label bias: this is mainly a problem with supervised learning, as you often have explicit labels (maybe with a bounding box), and there is no notion of ambiguity or subtlety in the labels;

- negative bias: again a supervised-learning problem, where the dataset simply does not cover all the other possible categories that lie outside it.

So now we know that we have all these problems with the coverage of the dataset. Ultimately though, the actual thing we care about is generalization performance, or basically how well the model does out-of-sample. After all, even if your model has all these issues, if it is intelligent enough to do well on out-of-sample things, then it’s sort of a moot point.

Key terms:

- domain shift: general term to describe the fact that the training data probability model \((X,Y)\) changes from train to test. In particular, it encompasses changes in \(P(Y \given X)\), \(P(X)\) or even \(P(Y,X)\).

- covariate shift: when the distribution of the covariates changes

- theory: source (\(S\)) and target (\(T\)) operate in the same space (\(X\) input, \(Y\) output). The conditional distributions are the same: \(P_S(Y \given X) = P_T(Y \given X)\) (which essentially says that the model is intact); but the marginal distribution has changed: \(P_S(X) \neq P_T(X)\).

- sample selection bias: same thing as covariate shift?? In my mind, sample selection bias is a more specific case of covariate shift that happens as a result of improper selection, whereas covariate shift can be something natural.

- class imbalance: specific to classification, when you have poorly represented classes (so here we’re talking about the \(Y\)’s)
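
To make the covariate shift definition above concrete, here is a minimal simulation sketch. Everything in it is an assumption for illustration and not from the paper: the shared conditional mean `sin(x)`, the Gaussian marginals, and the straight-line model. The conditional \(P(Y \given X)\) is identical in both domains and only \(P(X)\) moves, yet a model fit on the source can do much worse on the target.

```python
import numpy as np

def true_conditional_mean(x):
    # Shared by source and target: E[Y | X = x] is the same in both domains.
    return np.sin(x)

def sample_domain(rng, mean, n=2000, noise=0.1):
    # Only the marginal P(X) depends on the domain (through `mean`).
    x = rng.normal(loc=mean, scale=0.5, size=n)
    y = true_conditional_mean(x) + rng.normal(scale=noise, size=n)
    return x, y

rng = np.random.default_rng(0)
x_src, y_src = sample_domain(rng, mean=0.0)   # source / training domain
x_tgt, y_tgt = sample_domain(rng, mean=2.0)   # target / test domain

# Fit a straight line on the source domain only.
slope, intercept = np.polyfit(x_src, y_src, deg=1)

def mse(x, y):
    return float(np.mean((slope * x + intercept - y) ** 2))

mse_source = mse(x_src, y_src)
mse_target = mse(x_tgt, y_tgt)
print(f"source MSE: {mse_source:.3f}, target MSE: {mse_target:.3f}")
```

The line is a fine local approximation where the source data lives, so the extra error on the target comes purely from being evaluated in a region of \(X\) that the training set barely covered.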

A Statistician’s View

I think ML people take a very practical view of this problem. Yes, there is talk of conditional/marginal, but I think those are ultimately just convenient words. Statisticians rarely worry about all these problems, mainly because oftentimes the data is observational, as opposed to being curated for the purposes of training a model. This here is another difference between [[statistics-vs-ml]].

Transformers

- references:

- lil-log

- distill

- ebook-chapter on transformers (actually this ebook isn’t great, as it ends up being more about the implementation)

- it turns out that #attention is an earlier concept, which itself is motivated by the encoder-decoder sequence-to-sequence architecture

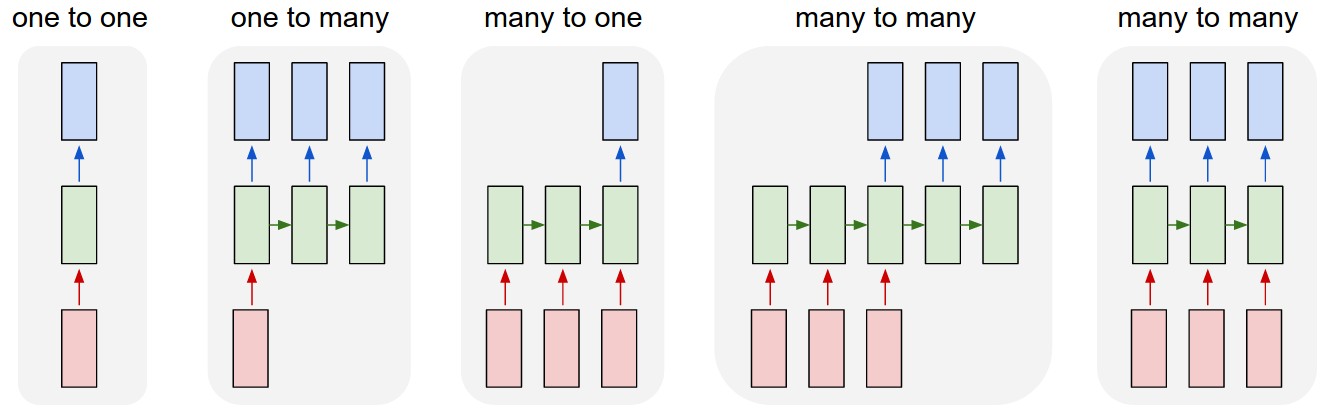

- (oops) I didn’t really understand this diagram:

- sequence to sequence models are essentially the third diagram, where basically the input sequence and output sequence are asynchronous

- versus the normal rnn, which takes the output as the input

- I really like the example of 1-to-many, image-to-caption, so your input is not a sequence, but your output is

- seq2seq example is the translation program, where importantly the input and output sequence don’t have to be the same length (due to the way languages differ in their realizations of the same meaning)

- the encoder is the rnn on the input sequence, and this culminates in the last hidden layer

- this is essentially the context vector: the idea here is that this (fixed-length) vector captures all the information about the sentence

- key: this acts like a sort of informational bottleneck, and is actually the impetus for the attention mechanism

- key point (via this tutorial):

- instead of having everything represented as the last hidden layer (fixed-length), why not just look at all the hidden layers (vectors representing each word)

- but, that would be variable length, so instead just look at a linear combination of those hidden layers. this linear combination is learned, and is basically attention

- Transformers #todo

- “prior art”

- CNN: easy to parallelize, but aren’t recurrent (can’t capture sequential dependency)

- RNN: reverse

- goal of transformers/attention is to achieve both parallelization and recurrence

- by appealing to “attention” to get the recurrence (?)
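
The "linear combination of hidden layers" idea from the notes above can be sketched as follows. This is only a rough illustration under my own assumptions: the `query` vector (standing in for something like the current decoder state) and the plain dot-product scoring are hypothetical choices, and nothing here is trained, whereas in a real model the scoring is learned.

```python
import numpy as np

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    exp = np.exp(scores - np.max(scores))
    return exp / exp.sum()

def attention_context(hidden_states, query):
    """Fixed-length context from a variable number of encoder states.

    hidden_states: (seq_len, d) array, one encoder hidden vector per word.
    query: (d,) array, e.g. the current decoder state (hypothetical here).
    """
    scores = hidden_states @ query          # one relevance score per word
    weights = softmax(scores)               # non-negative, sums to 1
    context = weights @ hidden_states       # weighted combination, shape (d,)
    return context, weights

rng = np.random.default_rng(0)
d = 4
for seq_len in (3, 7):                      # sentences of different lengths
    hidden_states = rng.normal(size=(seq_len, d))
    query = rng.normal(size=d)
    context, weights = attention_context(hidden_states, query)
    print(seq_len, context.shape, round(float(weights.sum()), 6))
```

The point to notice is that the context keeps the same shape regardless of sentence length, which is what sidesteps the variable-length problem without forcing everything through the last hidden state.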

Transformers

- key is multi-head self-attention

- encoded representation of input: key-value pairs (\(K,V \in \mathbb{R}^{n}\))

- corresponding to hidden states

- previous output is compressed into query \(Q \in \mathbb{R}^{m}\).

- output of the transformer is a weighted sum of the values (\(V\)).
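
A small sketch of the query/key/value picture above, using scaled dot-product attention for a single head. The reading of \(n\) as the number of key-value pairs and \(m\) as the number of queries, and the names `d_k` and `d_v` for the vector widths, are my assumptions; the notes do not pin these down.

```python
import numpy as np

def scaled_dot_product_attention(Q, K, V):
    """Q: (m, d_k) queries, K: (n, d_k) keys, V: (n, d_v) values."""
    d_k = K.shape[-1]
    scores = Q @ K.T / np.sqrt(d_k)                          # (m, n) query-key similarities
    scores = scores - scores.max(axis=-1, keepdims=True)     # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # each row sums to 1
    return weights @ V, weights                              # (m, d_v) weighted sums of values

rng = np.random.default_rng(0)
n, m, d_k, d_v = 5, 2, 8, 3
K = rng.normal(size=(n, d_k))
V = rng.normal(size=(n, d_v))
Q = rng.normal(size=(m, d_k))
output, weights = scaled_dot_product_attention(Q, K, V)
print(output.shape, weights.sum(axis=-1))
```

Multi-head attention, as I understand it, runs several of these in parallel on different learned projections of the inputs and concatenates the results; the projections are left out here to keep the sketch short.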

Todo

- [[toread]]: Visualizing and Measuring the Geometry of BERT arXiv

- random blog

- pretty intuitive description of transformers on tumblr, via the LessWrong community

Backlinks

- [[gpt3]]

- This is a 175b-parameter language model (117x larger than GPT-2). It doesn’t use SOTA architecture (the architecture is from 2018: 96 transformer layers with 96 attention heads; see [[transformers]]),[1] is trained on a few different text corpora, including CommonCrawl (i.e. random internet html text dump), Wikipedia, and books,[2] and follows the principle of language modelling (unidirectional prediction of next text token).[3] And yet, it’s able to be surprisingly general purpose and is able to perform surprisingly well on a wide range of tasks (examples curated below).

[1] The self-attention somehow encodes the relative position of the words (using some trigonometric functions?).

[2] The way they determine the pertinence/weight of a source is by upvotes on Reddit. Not sure how I feel about that.

[3] So, not bi-directional as is the case for the likes of #BERT. A nice way to think of BERT is as a denoising auto-encoder: you feed it sentences but hide some fraction of the words, and BERT has to guess those words.
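
The "hide some fraction of the words" view of BERT can be sketched with a toy corruption step. This only builds the training pairs (corrupted input, words to guess) and involves no model; the `[MASK]` placeholder string, the function name, and the default fraction are assumptions chosen for illustration.

```python
import random

MASK = "[MASK]"

def mask_tokens(tokens, frac=0.15, seed=0):
    """Hide a fraction of tokens; return the corrupted input and the targets."""
    rng = random.Random(seed)
    n_mask = max(1, round(frac * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    corrupted = list(tokens)
    targets = {}
    for pos in positions:
        targets[pos] = tokens[pos]   # what the model would have to guess
        corrupted[pos] = MASK
    return corrupted, targets

sentence = "the quick brown fox jumps over the lazy dog".split()
corrupted, targets = mask_tokens(sentence, frac=0.25)
print(corrupted)
print(targets)
```

By contrast, the unidirectional set-up described for GPT-3 would only ever predict the next token from the tokens to its left, so there is nothing to mask.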

Troubling Trends in Machine Learning Scholarship

src: Lipton and Steinhardt (2019): Lipton, Zachary, and Jacob Steinhardt. 2019. “Troubling Trends in Machine Learning Scholarship.” Queue, February.

Spurious Theorems

Spurious theorems are common culprits, inserted into papers to lend authoritativeness to empirical results, even when the theorem’s conclusions do not actually support the main claims of the paper.

This is a perfect description of a lot of theorems in machine learning papers (and, for that matter, statistics papers too). As a recent example, Theorem 2 in Arora, Cohen, and Hazan (2018) is borderline spurious. (Arora, Sanjeev, Nadav Cohen, and Elad Hazan. 2018. “On the optimization of deep networks: Implicit acceleration by overparameterization.” In 35th International Conference on Machine Learning, ICML 2018, 372–89. Institute for Advanced Studies, Princeton, United States.)

Backlinks

- [[on-the-optimization-of-deep-networks]]

- this proof felt a little spurious [[troubling-trends-in-machine-learning-scholarship]]

Next Steps for Interpolation

Let’s record the kinds of experimental data that we are interested in for [[project-interpolation]].

- Replicating what’s been shown in the literature

- In the Zhang et al. (2019) paper, they show that if you randomize the training data labels (in a classification problem), then you can still get zero training loss. (Zhang, Chiyuan, Benjamin Recht, Samy Bengio, Moritz Hardt, and Oriol Vinyals. 2019. “Understanding deep learning requires rethinking generalization.” In 5th International Conference on Learning Representations, ICLR 2017 - Conference Track Proceedings. University of California, Berkeley, Berkeley, United States.)

- the point here is that the classical ideas of understanding the performance of machine learning methods (VC dimension) break down.[1]

[1] Though, I always get confused about this. Probably should reread one of Arora’s blog posts.

- In some more recent work (Belkin et al. 2019), it was cited that if you randomize some of the training labels, you can basically still get the same generalization performance. (Belkin, Mikhail, Daniel Hsu, Siyuan Ma, and Soumik Mandal. 2019. “Reconciling modern machine-learning practice and the classical bias-variance trade-off.” Proceedings of the National Academy of Sciences 116 (32): 15849–54.)

- unclear to me if these two results are the same.

- I vaguely remember there was some place that talked about the relevance to #robustness or #adversarial_training.

- establish that for standard problems (like CIFAR/MNIST/*), if you perturb the data (some percentage, or be clever about picking the points), then you’re going to maintain test performance.

- New Ideas

- We conjecture that what’s going on is that there

- show that this
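
As a starting point for the label-randomization experiments listed above, here is a toy harness. It is a sketch under heavy assumptions and not a replication: the data are two synthetic Gaussian blobs in place of CIFAR/MNIST, and a 1-nearest-neighbour rule stands in for a deep network simply because it interpolates (memorises) whatever labels it is given. The helper names are hypothetical.

```python
import numpy as np

def corrupt_labels(y, frac, num_classes, rng):
    """Replace a fraction `frac` of labels with uniformly random classes."""
    y = y.copy()
    n_corrupt = int(round(frac * len(y)))
    idx = rng.choice(len(y), size=n_corrupt, replace=False)
    y[idx] = rng.integers(0, num_classes, size=n_corrupt)
    return y

def one_nn_predict(x_train, y_train, x_query):
    """1-nearest-neighbour: an interpolating rule that memorises its training set."""
    dists = ((x_query[:, None, :] - x_train[None, :, :]) ** 2).sum(axis=-1)
    return y_train[dists.argmin(axis=1)]

rng = np.random.default_rng(0)
num_classes, n_train, n_test, dim = 2, 500, 500, 2

def sample(n):
    # Two well-separated Gaussian blobs, one per class.
    y = rng.integers(0, num_classes, size=n)
    x = rng.normal(size=(n, dim)) + 4.0 * y[:, None]
    return x, y

x_train, y_train = sample(n_train)
x_test, y_test = sample(n_test)

results = {}
for frac in (0.0, 0.2, 1.0):
    y_noisy = corrupt_labels(y_train, frac, num_classes, rng)
    train_acc = float(np.mean(one_nn_predict(x_train, y_noisy, x_train) == y_noisy))
    test_acc = float(np.mean(one_nn_predict(x_train, y_noisy, x_test) == y_test))
    results[frac] = (train_acc, test_acc)
    print(f"corrupted={frac:.0%}  train acc={train_acc:.2f}  test acc={test_acc:.2f}")
```

Training accuracy stays perfect at every corruption level because each training point is its own nearest neighbour, which mirrors the "zero training loss on random labels" observation. How test accuracy degrades with partial corruption is the part that would need checking on the real datasets and models.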