202006131504

Transformers

tags: [ neural_networks , machine_learning , _todo , _attention ]

- references:

- lil-log

- distill

- ebook-chapter on transformers (actually this ebook isn’t great, as it ends up being more about the implementation)

- it turns out that #attention is an earlier concept, which itself is motivated by the encoder-decoder sequence-to-sequence architecture

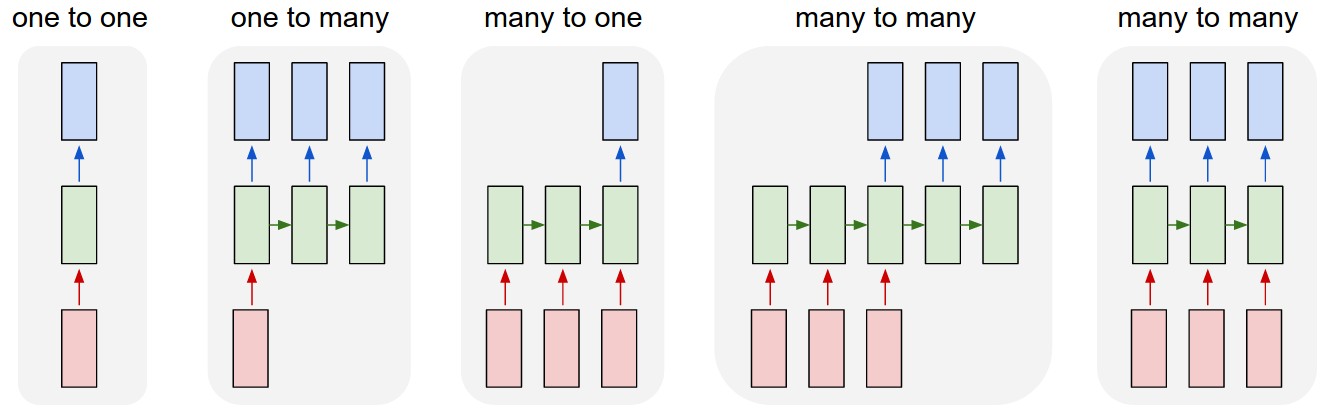

- (oops) I didn’t really understand this diagram:

- sequence-to-sequence models are essentially the third diagram, where the input sequence and output sequence are asynchronous

- versus the normal rnn, which feeds each step's output back in as the next step's input

- I really like the example of 1-to-many, image-to-caption, so your input is not a sequence, but your output is

- the standard seq2seq example is machine translation, where importantly the input and output sequences don’t have to be the same length (languages differ in how they realize the same meaning)

- the encoder is the rnn run over the input sequence, and this culminates in the last hidden state

- this is essentially the context vector: the idea here is that this (fixed-length) vector captures all the information about the sentence

- key: this acts as an informational bottleneck, and is actually the impetus for the attention mechanism

- key point (via this tutorial):

- instead of having everything represented by the last hidden state (fixed-length), why not just look at all the hidden states (one vector per word)

- but that would be variable length, so instead just look at a linear combination of those hidden states. the weights of this linear combination are learned, and this is basically attention

- Transformers #todo

- “prior art”

- CNN: easy to parallelize, but aren’t recurrent (can’t capture sequential dependency)

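- RNN: the reverse (captures sequential dependency, but hard to parallelize)

- goal of transformers/attention is to achieve both: parallelization and the ability to capture sequential dependency

- by appealing to “attention”, rather than recurrence, to get the sequential dependency (?)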

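The "linear combination of hidden states" idea from the key point above can be sketched in NumPy. This is only a rough illustration, not the method from any of the references: the bilinear scoring function (`W_a`), the plain tanh RNN, the dimensions and the random untrained weights are all my own assumptions, and the notes don't say which scoring function the tutorial uses.

```python
import numpy as np

def softmax(x):
    x = x - np.max(x)              # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum()

def rnn_encoder(inputs, W_xh, W_hh):
    """Plain tanh RNN; returns every hidden state, one per input token."""
    h = np.zeros(W_hh.shape[0])
    states = []
    for x in inputs:
        h = np.tanh(W_xh @ x + W_hh @ h)
        states.append(h)
    return np.stack(states)        # shape (n, d_h)

def attention_context(decoder_state, encoder_states, W_a):
    """Context = weighted sum of ALL encoder hidden states.

    Assumption: scores use a bilinear form h_i^T W_a s; in a real model
    W_a would be learned, here it is just random.
    """
    scores = encoder_states @ (W_a @ decoder_state)   # shape (n,)
    weights = softmax(scores)                         # non-negative, sums to 1
    return weights @ encoder_states, weights          # context has shape (d_h,)

rng = np.random.default_rng(0)
n, d_in, d_h = 5, 4, 3             # hypothetical sizes
inputs = rng.normal(size=(n, d_in))
W_xh = rng.normal(size=(d_h, d_in))
W_hh = rng.normal(size=(d_h, d_h))
W_a = rng.normal(size=(d_h, d_h))

states = rnn_encoder(inputs, W_xh, W_hh)
fixed_context = states[-1]         # the bottleneck: last hidden state only
decoder_state = rng.normal(size=d_h)
context, weights = attention_context(decoder_state, states, W_a)
```

Note that `context` has the same fixed length as `fixed_context` no matter how long the input is, which is how the linear combination sidesteps the variable-length problem.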
Transformers

- key is multi-head self-attention

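- encoded representation of input: a set of key-value pairs (\(K,V \in \mathbb{R}^{n}\)), where \(n\) is the input sequence length

- corresponding to the encoder hidden states

- in the decoder, the previous output is compressed into a query \(Q \in \mathbb{R}^{m}\).

- the output of attention is a weighted sum of the values (\(V\)), where the weight on each value comes from how well its key matches the query.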

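A minimal sketch of the query/key/value picture above, assuming the standard scaled dot-product form (softmax of \(QK^\top/\sqrt{d_k}\) applied to \(V\)). Here \(K\) and \(V\) are stored as \(n\) rows (one per input position) and \(Q\) as \(m\) rows. The projection matrices `W_q`, `W_k`, `W_v`, `W_o`, the sizes and the random weights are placeholders of mine, not anything from the references.

```python
import numpy as np

def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)   # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def scaled_dot_product_attention(Q, K, V):
    """Q: (..., m, d_k), K: (..., n, d_k), V: (..., n, d_v)."""
    d_k = K.shape[-1]
    scores = Q @ K.swapaxes(-1, -2) / np.sqrt(d_k)   # (..., m, n)
    weights = softmax(scores, axis=-1)               # each row sums to 1
    return weights @ V, weights                      # weighted sum of values

def multi_head_self_attention(X, W_q, W_k, W_v, W_o, num_heads):
    """Self-attention: Q, K and V are all projections of the same input X."""
    n, d_model = X.shape
    d_head = d_model // num_heads

    def split(M):
        # (n, d_model) -> (num_heads, n, d_head)
        return M.reshape(n, num_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(X @ W_q), split(X @ W_k), split(X @ W_v)
    heads, _ = scaled_dot_product_attention(Q, K, V)        # (num_heads, n, d_head)
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)   # glue heads back together
    return concat @ W_o

rng = np.random.default_rng(0)
n, d_model, num_heads = 4, 8, 2          # hypothetical sizes
X = rng.normal(size=(n, d_model))
W_q, W_k, W_v, W_o = (rng.normal(size=(d_model, d_model)) for _ in range(4))
out = multi_head_self_attention(X, W_q, W_k, W_v, W_o, num_heads)
```

Every position attends to every other position in one matrix product, with no loop over time steps, which is where the parallelization comes from.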
Todo

- [[toread]]: Visualizing and Measuring the Geometry of BERT arXiv

- random blog

- pretty intuitive description of transformers on tumblr, via the LessWrong community

Backlinks

- [[gpt3]]

- This is a 175b-parameter language model (117x larger than GPT-2). It doesn’t use SOTA architecture (the architecture is from 2018: 96 transformer layers with 96 attention heads (see [[transformers]]) [1]), is trained on a few different text corpora, including CommonCrawl (i.e. random internet html text dump), wikipedia, and books [2], and follows the principle of language modelling (unidirectional prediction of next text token) [3]. And yet, it’s able to be surprisingly general purpose and is able to perform surprisingly well on a wide range of tasks (examples curated below).

- [1] The self-attention somehow encodes the relative position of the words (using some trigonometric functions?).

- [2] The way they determine the pertinence/weight of a source is by upvotes on Reddit. Not sure how I feel about that.

- [3] So, not bi-directional as is the case for the likes of #BERT. A nice way to think of BERT is a denoising auto-encoder: you feed it sentences but hide some fraction of the words, and BERT has to guess those words.